Meta has launched Muse Spark, a new AI model from its Superintelligence Labs. The system is designed to power “personal superintelligence” across its apps. According to Meta, the model is multimodal and capable of coordinating multiple AI agents to complete tasks.

The company says Muse Spark will be rolled out in the Meta AI app and on the Meta AI website in the U.S., with wider integration planned across its major platforms, including WhatsApp, Instagram, Facebook, Messenger, and Meta’s smart glasses.

Meta’s Chief AI Officer, Alexandr Wang, confirmed the update in a post on X, calling it “the most powerful model that Meta has released.”

What Muse Spark does inside Meta’s ecosystem

Earlier models like Llama were largely open source and aimed at developers. Muse Spark is more tightly controlled and built for direct use inside Meta’s own products.

Meta says Muse Spark is a multimodal AI model, meaning it can work across different types of input, such as text and images. The company also claims the model is designed to coordinate multiple AI agents at the same time.

This suggests users will not be interacting with a single-response chatbot in isolation. Instead, Muse Spark is positioned to manage multiple tasks through connected AI agents that can operate across different steps of a request.

Two modes inside Meta AI: Instant and Thinking

According to Meta, the rollout starts with a redesigned interface and expanded capabilities, and Meta AI now operates in two modes. The first is “Instant” mode, which is used for short and direct answers. The second is “Thinking” mode, which is used when a query requires more steps or deeper processing before a response is returned.

The system allows users to switch between both modes depending on the type of question being asked. For example, a direct factual question would be handled in Instant mode, while a question involving comparisons or multiple parts would be handled in Thinking mode.

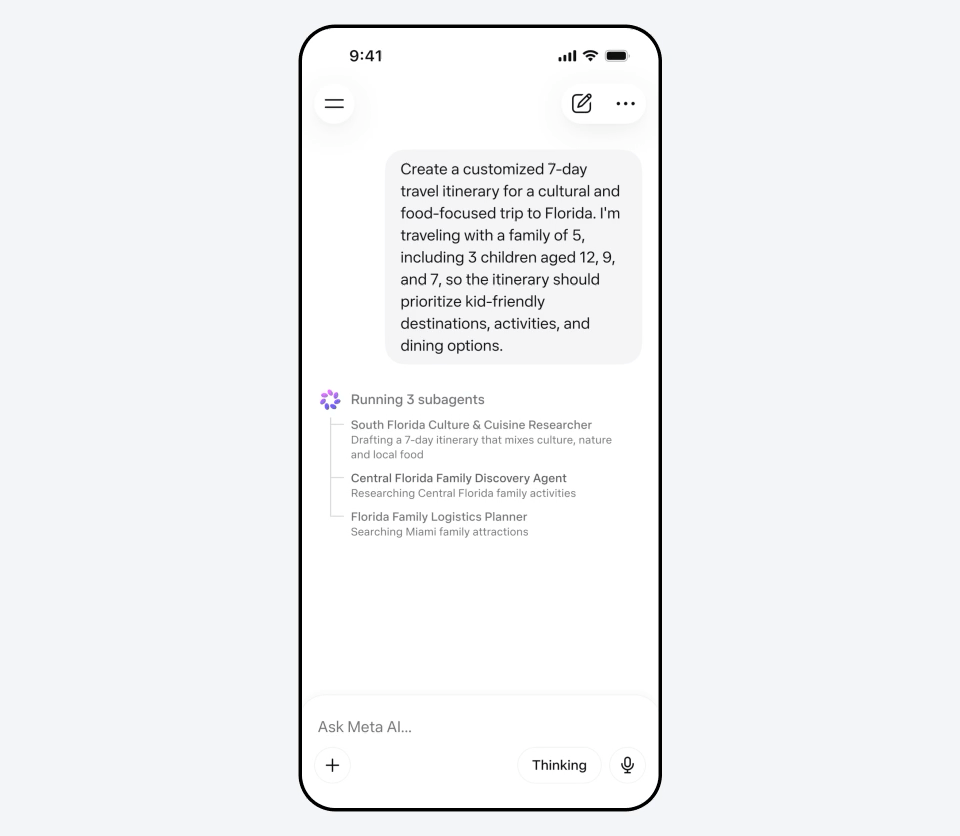

Multiple sub-agents working on one request

Muse Spark introduces a structure where Meta AI can split a single query into multiple sub-tasks and assign them to different AI agents. Meta explains this using a travel planning example. In that case, one agent can draft an itinerary, another can compare locations, and another can search for activities. Each runs at the same time, and the results are combined into a single response.

Instead of handling a request in a single pass, the system distributes parts of the task across different processes.
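The fan-out and combine pattern described above can be sketched in a few lines of Python. This is only an illustration of the general idea, not Meta's implementation: the three agent functions, their names, and the way results are joined are all hypothetical stand-ins, and each "agent" simply returns a placeholder string.

```python
import asyncio

# Hypothetical sub-agents. In a real system each would call a model or a tool;
# here each one just waits briefly and returns a placeholder string.
async def draft_itinerary(request: str) -> str:
    await asyncio.sleep(0.01)  # simulate work
    return f"Itinerary draft for: {request}"

async def compare_locations(request: str) -> str:
    await asyncio.sleep(0.01)
    return f"Location comparison for: {request}"

async def search_activities(request: str) -> str:
    await asyncio.sleep(0.01)
    return f"Activity ideas for: {request}"

async def handle_request(request: str) -> str:
    # Fan out: start all sub-agents concurrently on the same request.
    results = await asyncio.gather(
        draft_itinerary(request),
        compare_locations(request),
        search_activities(request),
    )
    # Fan in: merge the partial results into one response.
    # asyncio.gather returns results in the order the tasks were passed in.
    return "\n".join(results)

if __name__ == "__main__":
    print(asyncio.run(handle_request("a weekend in Lisbon")))
```

Because the sub-tasks run concurrently, the total wait in this sketch is roughly that of the slowest agent rather than the sum of all three.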

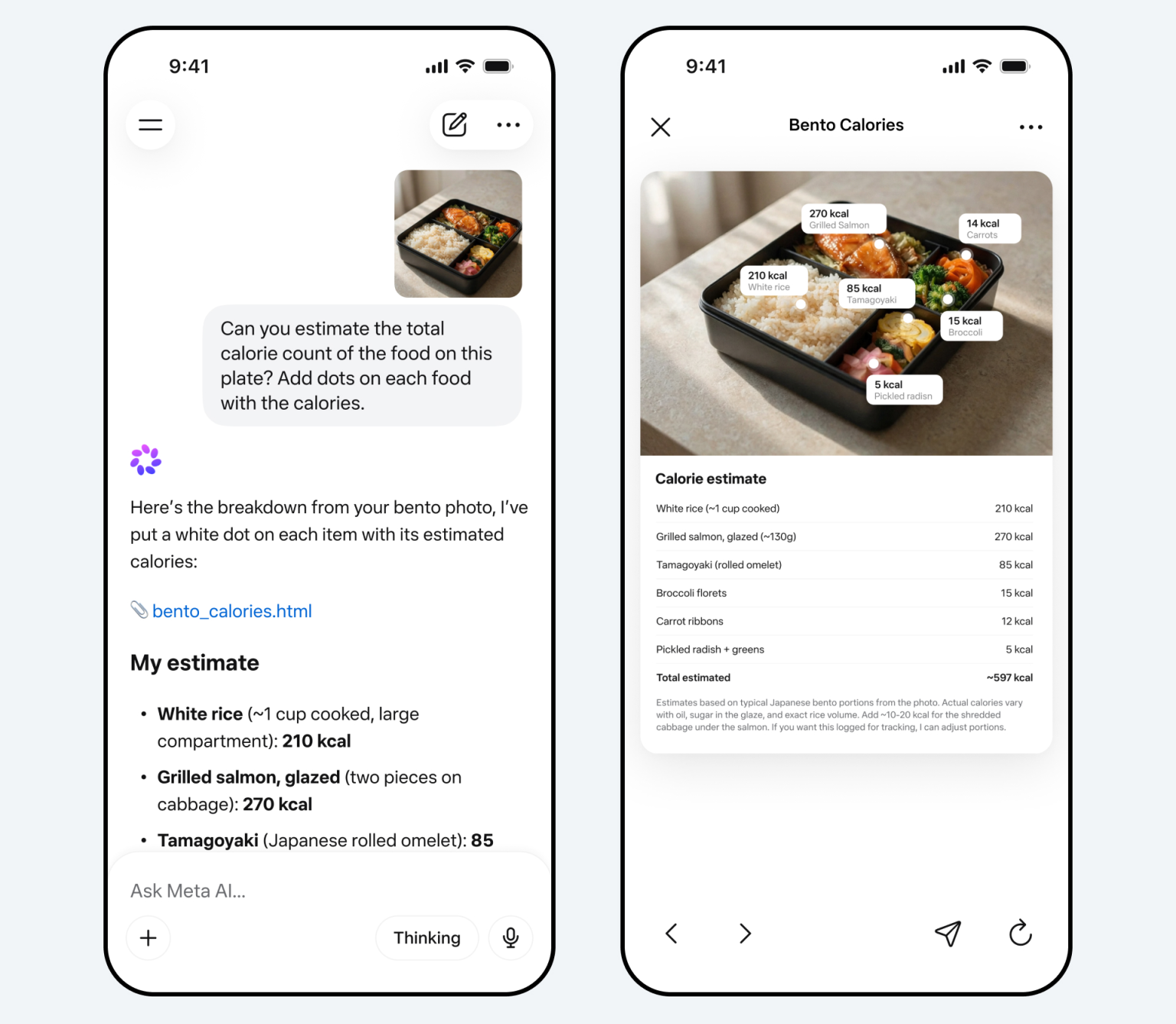

Image-based inputs and visual understanding

The system now supports image inputs alongside text. Users can upload or capture images and ask questions based on what is shown. For example, a photo of packaged snacks can be used to identify items and compare their nutritional content.

The same applies to product scans, where Meta AI can describe what is in the image and place it alongside similar items for comparison. This moves the system from text-only input to combined text and image queries in one interaction.

Shopping and discovery become part of AI interactions

Another key part of Muse Spark is its integration with shopping, product discovery, and lifestyle-related queries. The model can suggest products, generate recommendations, and simulate how items might look or fit based on user input.

According to Meta, Muse Spark can help users decide what to wear, how to style a room, or what to buy for someone. It draws from content and inspiration already circulating across Meta’s apps, including posts from creators and communities.

For example, a user asking for outfit ideas might receive suggestions based on styling trends seen in posts from people they follow or engage with.

This same structure applies to product discovery, where the AI uses existing content signals rather than treating the request in isolation.

Support for science, math, and health-related questions

Meta also says Muse Spark can respond to more complex questions in areas like science, math, and health. In these cases, Meta claims the system will handle structured problems rather than simple factual queries. It can also interpret images and charts that are part of the question.

For example, a user could upload a chart or diagram and ask for an explanation. The system would then break down the visual information and respond based on what is shown. Meta frames this as part of its broader reasoning capability inside the AI system.

Context from posts and location-based content

Meta AI can also pull contextual information from public posts when users ask about places or trending topics. Meta claims the system combines these signals with the user’s question to produce a contextual response based on available content across the apps.

For example, a query about a location can surface public posts from users who have shared content from that area. A trending topic query can bring in related discussions from across Meta’s platforms.

A response to growing competition in AI

Muse Spark arrives at a time when Meta is increasing investment in AI infrastructure while competing with companies such as OpenAI, Anthropic, and Google in model development and deployment. Large tech companies are scaling both models and infrastructure at the same time, while focusing on integrating AI into existing consumer platforms rather than standalone tools.

Reports around the launch note that Meta has expanded spending on computing systems and reorganized parts of its AI division under Superintelligence Labs. The unit was formed as part of a broader restructuring effort and is led by Alexandr Wang, who joined Meta following its $14.3 billion investment in Scale AI.

The company is investing heavily in infrastructure, talent, and product integration. In its last earnings report, Meta said it expects capital expenditures to range from $115 billion to $135 billion.

Muse Spark is an early step in that process. It shows how Meta plans to bring AI directly into its platforms, where billions of users already engage with content daily.

Meta says future versions of Muse Spark will be made publicly available. A private API preview is also opening to select partners, with an enterprise API positioned as a future revenue stream.

Recap

What is Muse Spark and what does it do?

Muse Spark is a new AI model built by Meta Superintelligence Labs, the team Meta assembled to develop its next generation of AI. The model now powers Meta AI, the assistant built into Meta's apps. It can answer questions quickly, handle complex multi-step tasks, read and describe images, generate websites from text descriptions, and answer health-related image queries. More than 1,000 physicians contributed to developing its health image capabilities. Meta plans to make future versions publicly available; the initial release is not.

What does Muse Spark's shopping mode do for brands?

Muse Spark includes a shopping mode that surfaces product comparisons with purchase links, drawing from creator content across Instagram, Facebook, and Threads. A user can describe what they are looking for inside a Meta AI conversation, and the assistant returns relevant product options with links to buy. Meta has not disclosed how sponsored products interact with shopping mode, or whether recommendations are organic, paid, or a combination.

Where is Muse Spark available?

Muse Spark currently powers the Meta AI app and meta.ai in the U.S. Meta plans to extend it to WhatsApp, Instagram, Facebook, Messenger, and Ray-Ban Meta glasses in the weeks following the April 8 launch. No timeline for international expansion has been announced.